Mastering CUDA and High-Performance Computing, Part I

A Deep Dive from Compiler Internals to High-Performance Parallel Computing

A Personal Journey into GPU Computing

I still remember November 12, 2024, with a clarity that surprises even me to this day, even though more than a year has already passed.

I had spent the evening lost in LLVM internals, tracing the intricate dance between front-end parsing, IR transformations, optimization passes, and backend code generation.

I explored FunctionPassManager sequences, observed GVN, SROA, LoopVectorization, Instruction Combining, and Dead Code Elimination, and tried to correlate these transformations with register pressure, live interval analysis, and instruction scheduling heuristics.

Every detail, every pass, seemed like a miniature universe of logic, optimization, and constraint satisfaction.

I had always been fascinated by the intersection of software, hardware, and system infrastructure; but until that night, I had never realized just how foundational and deep that connection could be.

I was thinking about compilers, IR, and abstract execution models, but I had no sense yet of how these abstractions directly map to silicon at massive scale.

Then I stumbled upon an article about DeepSeek R1, the PTX intermediate representation, and the orchestration of thousands of threads across NVIDIA GPUs’ Streaming Multiprocessors (SMs).

It felt like stepping through a door into a world I had only glimpsed. Almost instantly, I found myself in the middle of a global conversation: for days, forums, blogs, and even financial news outlets buzzed about DeepSeek.

Developers dissected PTX, debated its scheduling optimizations, speculated about the next wave of AI workloads, and NVIDIA’s stock price swung dramatically.

While most people were caught up in hype, speculation, or high-level geopolitical and financial implications, I became obsessed with the core layer: the base of the software, the invisible threads connecting high-level code to the hardware executing it.

I devoured every whitepaper, blog post, SDK guide, and forum discussion I could find, trying to understand exactly how PTX bridges high-level CUDA kernels with the underlying SM pipelines, how execution is scheduled across warps, and how memory hierarchies (register files, shared memory banks, L1/L2 caches, and DRAM) are orchestrated at a microscopic level.

At that time, I barely understood what a GPU did beyond simple graphics acceleration or using high-level CUDA frameworks. But the article illuminated something crucial: modern computational performance isn’t just about clever algorithms.

It is about how software maps onto hardware through multiple layers of compilation and execution, aspects that most programmers, including myself until that night, rarely consider.

I realized that to truly understand high-performance GPU computing, I needed to trace the entire execution path: from CUDA C++ kernels, through LLVM IR transformations, into PTX, and finally into the Streaming Multiprocessor pipelines themselves.

Suddenly, my abstract fascination with compilers and infrastructure became a tangible, almost physical journey.

Every kernel, every loop, every thread had a story. I could begin to see how thread indexing (threadIdx, blockIdx, blockDim) maps logically to warps, how memory coalescing affects throughput, how shared memory bank conflicts serialize execution, and how predication avoids warp divergence penalties.

I was no longer just reading about GPUs; I was entering their world thread by thread, instruction by instruction, tracing the invisible logic that transforms high-level abstractions into thousands of coordinated instruction streams flowing through silicon.

That night marked the start of a transformation in my understanding. It wasn’t merely academic curiosity anymore; it was a journey into the layered reality of modern computing, where compiler theory, PTX abstractions, and microarchitectural details converge to define what is actually possible on a GPU.

And it was the beginning of the series you’re reading: a deep dive into CUDA, LLVM IR, PTX, and SM execution, told from the perspective of someone who has traced every layer, experimentally and obsessively, to understand not just how GPUs compute, but why.

My LLVM odyssey

Before GPUs became the core focus of my studies, I was already deeply immersed in software infrastructure, with a singular fascination: compilers.

Their ability to transform high-level code into highly optimized machine instructions, managing dependencies, scheduling, and register allocation, had always captivated me: almost like engineering alchemy, where abstract logic crystallizes into finely tuned execution on silicon.

I spent months dissecting LLVM’s internals, tracing each stage of the pipeline.

At the front-end, I studied clang’s parsing, type-checking, and LLVM IR generation, observing how control flow graphs, SSA form, and metadata are preserved to represent program semantics accurately.

LLVM IR became a playground of possibilities, allowing multiple layers of analysis, transformation, and optimization without committing to a target architecture.

Next came the optimization passes. I followed the FunctionPassManager, analyzing pass ordering and dependencies.

I explored Global Value Numbering (GVN) to identify equivalent computations, Scalar Replacement of Aggregates (SROA) to break down complex structures, and LoopVectorization to exploit SIMD execution patterns.

Instruction Combining and Dead Code Elimination (DCE) revealed how small IR-level transformations propagate through instruction scheduling, register pressure, and memory accesses.

Diving deeper, I mapped IR onto hardware targets through InstructionSelector DAGs, examining how LLVM matches abstract operations to target-specific opcodes, considers RegisterClass constraints, and queries TargetTransformInfo to evaluate instruction latency, throughput, and memory cost models.

I traced live interval analysis in relation to register allocation heuristics, noting how spilling, rematerialization, and coalescing decisions affect execution efficiency: critical insights for architectures with thousands of concurrent threads.

Predication and control-flow lowering fascinated me: conditional branches in IR could be transformed into predicated instructions, minimizing pipeline stalls.

Loop transformations (unrolling, interchange, fusion, and vectorization) demonstrated the delicate balance between instruction-level parallelism, memory alignment, and cache behavior.

Each IR pass could increase or decrease register pressure, affect instruction scheduling, or modify memory footprint, directly influencing runtime performance.

Even then, I did not anticipate how this knowledge would later intersect with GPU architectures.

LLVM IR transformations, optimization passes, and backend heuristics were abstract exercises; but they had prepared me to reason about CUDA kernels, PTX intermediate representation, warp scheduling, shared memory layouts, and SM pipelines.

Every abstraction, every instruction, and every scheduling decision became a lens through which I could understand how software maps efficiently onto massively parallel hardware.

The evening of discovery: PTX and DeepSeek

January 20, 2025. That evening remains etched in my memory. After hours lost in LLVM’s inner workings, by tracing IR transformations, exploring FunctionPassManager sequences, and correlating register allocation with live interval analysis, I stumbled upon the DeepSeek R1 article.

The moment felt surreal: everything I had been exploring in software infrastructure suddenly collided with the raw power of hardware parallelism.

The article unpacked PTX, NVIDIA’s virtual ISA, and detailed how thousands of threads execute across warps on a Streaming Multiprocessor (SM).

Each warp, a bundle of 32 threads in SIMT execution, follows the instruction scheduler, hiding latency by rapidly switching between warps.

PTX abstracts this complexity: developers write kernels in CUDA C++, compile them with NVCC, which emits PTX, and the NVIDIA driver JIT-compiles the PTX into device-specific cubins streamed into the SM pipelines.

PTX fascinated me for several reasons:

Forward compatibility: A PTX kernel compiled today could run on next-generation GPUs via JIT compilation, bridging software longevity and hardware evolution.

Hardware abstraction: Kernels could be written without intimate knowledge of specific SM layouts, register files, or shared memory bank conflicts.

Optimization opportunities: Despite abstraction, peak performance still demanded deep awareness of occupancy, memory coalescing, shared memory conflicts, warp divergence, and pipeline latencies.

The parallels with LLVM were striking. LLVM transforms high-level C++ into IR, applies passes like GVN, SROA, LoopVectorization, Instruction Combining, and Dead Code Elimination, and lowers code to target-specific instructions.

PTX, on the other hand, expresses parallel execution semantics independently of final hardware mapping. Both systems separate abstraction from execution yet require deep knowledge to optimize performance.

I sat tracing mental parallels between LLVM’s DAG-based instruction selection and PTX’s warp-scheduled execution. LLVM must reason about register pressure, instruction latency, predication, and control-flow lowering.

PTX, in turn, demands understanding warp occupancy, coalesced memory accesses, shared memory bank conflicts, and L1/L2 cache interplay with DRAM.

That night, DeepSeek and PTX became more than concepts. They bridged my compiler obsession with high-performance GPU computing.

I realized that to truly understand CUDA, I needed to follow the full path: high-level CUDA kernels → NVCC → LLVM IR → PTX → cubins → SM pipelines, where thousands of threads coordinate in lockstep to perform computations that would overwhelm any CPU.

Tracing CUDA Kernels, PTX, and the Hardware Dance

When I first started writing CUDA kernels, my experiments were deliberately simple: matrix addition, vector scaling, small convolution operations. I wanted to see, concretely, how high-level code transformed as it moved down the compilation chain.

I compiled these kernels and examined the PTX emitted by NVCC. The experience was revelatory, almost like peeling back a layer of abstraction to reveal a hidden parallel universe of computation.

PTX exposed the fundamental building blocks of GPU execution:

Thread indexing: Registers like

threadIdx,blockIdx, andblockDimdefine each thread’s unique identity in a multidimensional grid. Observing PTX code, I could see how each thread calculates its global index using arithmetic derived from block and grid dimensions.Memory coalescing: PTX made it clear how critical memory alignment is. Threads within a warp that access contiguous addresses can combine requests into a single transaction, dramatically increasing throughput. Misalignment, however, causes serialized transactions, stalls, and pipeline bubbles.

Shared memory and bank conflicts: Threads in a block share memory, divided into banks. I could observe how multiple threads attempting to access the same bank would serialize operations, a subtle yet crucial bottleneck.

Predication: Divergent branches are another warp-level concern. PTX can transform conditional execution into predicated instructions, effectively executing both paths but masking results for non-taken threads. Seeing divergent loops lowered into predicated instructions showed me how compiler heuristics, IR transforms, and PTX scheduling work together for correct, parallel execution.

I remember embedding inline PTX inside simple kernels, controlling instruction ordering, memory patterns, and even warp-level operations that CUDA C++ itself could not expose.

It was a hands-on lesson: the GPU is not just a black box for parallelism; it is a layered ecosystem of instruction streams, memory banks, and scheduling heuristics, all orchestrated to maximize throughput.

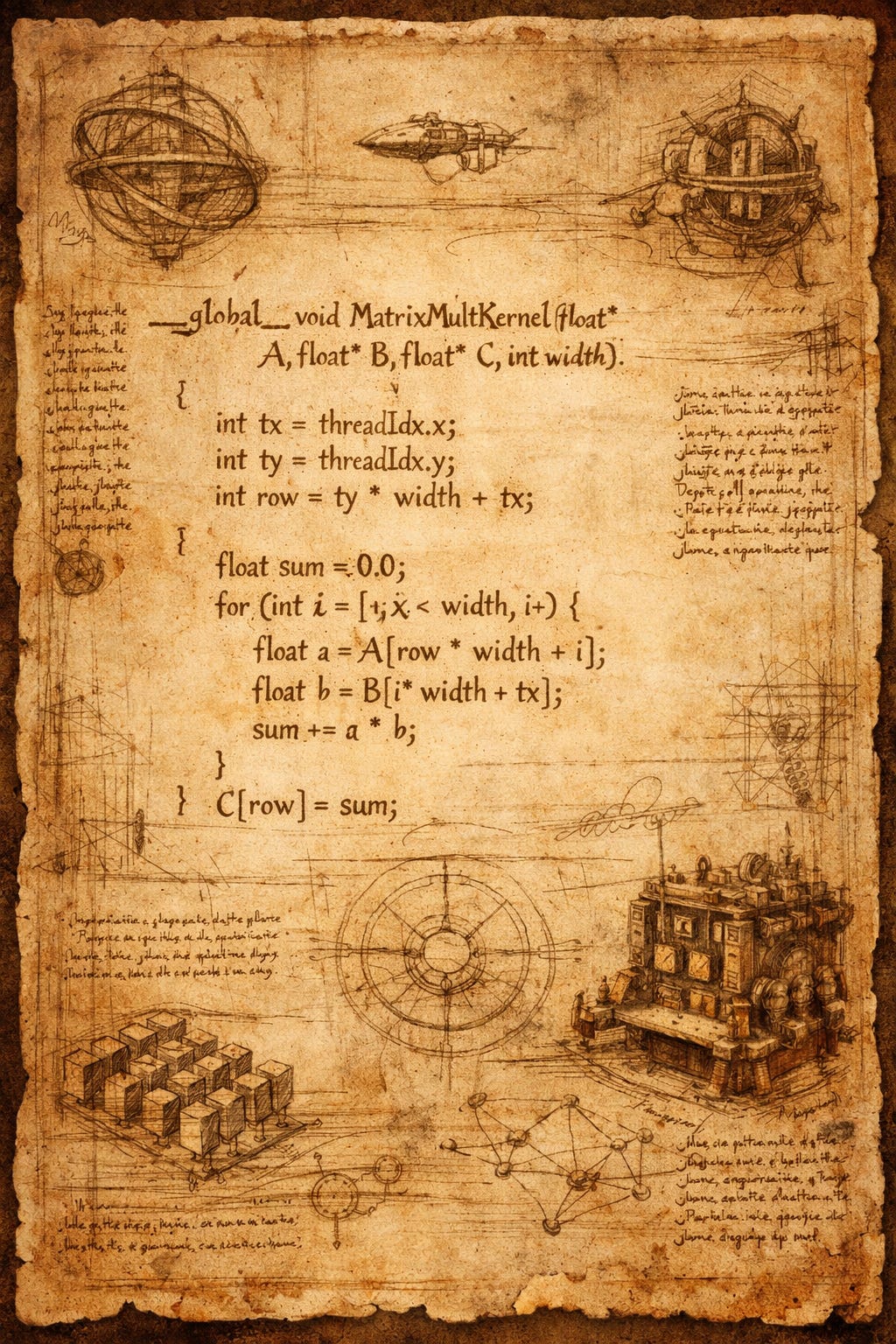

Matrix Multiplication and Warp-Level Optimization

After mastering vector addition, I wanted a more challenging experiment — something that truly tested the GPU’s compute and memory subsystems. I chose matrix multiplication, the classic computational kernel in high-performance computing, AI, and scientific simulations.

The first naïve implementation looked like this:

__global__ void matMulNaive(float* A, float* B, float* C, int N) {

int row = threadIdx.y + blockIdx.y * blockDim.y;

int col = threadIdx.x + blockIdx.x * blockDim.x;

if(row < N && col < N){

float sum = 0.0f;

for(int k = 0; k < N; ++k){

sum += A[row * N + k] * B[k * N + col];

}

C[row * N + col] = sum;

}

}At a high level, this seems straightforward: each thread computes a single element of the result matrix.

But my experience with vector addition had taught me to look deeper, beyond correctness, into how this kernel would map onto PTX and SM pipelines.

LLVM IR insights

I compiled the kernel with NVCC targeting PTX and examined the LLVM IR output. Immediately, several patterns emerged:

Nested loops are represented as IR loops: The outer loops over rows and columns map directly to thread indexing arithmetic. The inner loop over

kis a canonical loop withphinodes tracking the accumulatorsum.SSA form and virtual registers: Every variable (

row,col,k,sum) exists as a virtual register, yet LLVM’s optimization passes can combine, eliminate, or spill them based on usage patterns.Load/store separation: Every access to

A[row * N + k]andB[k * N + col]generates explicitloadinstructions. LLVM can apply scalar replacement or loop-invariant code motion, lifting certain computations out of loops to reduce redundant instructions.

I remember one night sitting with my IR and a notebook, tracing the phi nodes and loop unrolling transformations. I observed how a small tweak in IR, say unrolling the inner loop by 2, could double the number of live registers per thread.

I immediately understood that naive unrolling without accounting for register pressure could reduce occupancy, a lesson painfully learned with vector addition.

PTX, a parallel symphony

After IR, I looked at the generated PTX. The arithmetic, loads, and stores became explicit thread-local instructions:

mul.lo.u32 %row_idx, %blockIdx.y, %blockDim.y

add.u32 %row, %row_idx, %threadIdx.y

mul.lo.u32 %col_idx, %blockIdx.x, %blockDim.x

add.u32 %col, %col_idx, %threadIdx.x

setp.ge.u32 %p0, %row, %N

setp.ge.u32 %p1, %col, %N

or.pred %p2, %p0, %p1

@%p2 bra DONE

mov.f32 %sum, 0.0

LOOP:

ld.global.f32 %a, [A + %row*N + %k]

ld.global.f32 %b, [B + %k*N + %col]

fma.rn.f32 %sum, %a, %b, %sum

add.u32 %k, %k, 1

setp.lt.u32 %cond, %k, %N

@%cond bra LOOP

st.global.f32 [C + %row*N + %col], %sum

DONE:

I was fascinated by several features:

Fused multiply-add (FMA) instructions: PTX automatically uses FMA when possible, maximizing FLOPs per cycle.

Predicated branches: Out-of-bounds threads are masked off, avoiding unnecessary execution while keeping warps aligned.

Thread indexing arithmetic: Derived directly from

threadIdx,blockIdx, andblockDim. Every PTX instruction respects these indices to maintain correctness across thousands of threads.

But even this PTX was naive. Each thread individually loaded elements of A and B from global memory. I knew from my vector addition experiments that misaligned global accesses and low arithmetic intensity would throttle performance.

Shared memory tiling

The next step was introducing shared memory tiling, a standard GPU optimization. I rewrote the kernel to load tiles of A and B into shared memory, perform the multiply-accumulate locally, and then write back the result:

__global__ void matMulTiled(float* A, float* B, float* C, int N){

__shared__ float As[32][32];

__shared__ float Bs[32][32];

int row = threadIdx.y + blockIdx.y*blockDim.y;

int col = threadIdx.x + blockIdx.x*blockDim.x;

float sum = 0.0f;

for(int tile=0; tile < N/32; ++tile){

As[threadIdx.y][threadIdx.x] = A[row*N + tile*32 + threadIdx.x];

Bs[threadIdx.y][threadIdx.x] = B[(tile*32 + threadIdx.y)*N + col];

__syncthreads();

for(int k=0; k<32; ++k)

sum += As[threadIdx.y][k] * Bs[k][threadIdx.x];

__syncthreads();

}

C[row*N + col] = sum;

}Here, I could directly control shared memory layout, a factor invisible at the high-level IR stage. I experimented with bank conflicts, adjusting tile strides to avoid multiple threads accessing the same bank simultaneously.

The performance gains were immediate: bandwidth utilization improved, and warp stalls due to serialized accesses dropped dramatically.

Warp scheduling, occupancy, and register pressure

Next, I traced the PTX generated by the tiled kernel. The number of live registers increased because each thread now held sum, multiple shared memory references, and loop counters. I correlated this with SM occupancy:

Registers per thread: Increasing them beyond ~64 per thread reduced active warps per SM from 64 to 32.

Warp interleaving: Fewer active warps reduced the hardware’s ability to hide memory latency.

Shared memory allocation: Large tiles per block reduced the number of blocks that could reside concurrently on an SM.

These experiments mirrored what I had seen in LLVM: IR-level decisions cascade all the way to warp execution and memory latency hiding.

Inline PTX Experiments in Matrix Multiplication

I pushed further, embedding inline PTX inside tiled kernels:

asm volatile(

"ld.shared.f32 %fA, [As + %threadIdx.y*32 + %k];\n\t"

"ld.shared.f32 %fB, [Bs + %k*32 + %threadIdx.x];\n\t"

"fma.rn.f32 %sum, %fA, %fB, %sum;\n\t"

);This allowed me to:

Test instruction ordering and latency hiding manually.

Experiment with predication and divergent threads, observing how small PTX-level changes could improve warp execution.

Measure shared memory bank conflicts in real time, adjusting indexing to maximize throughput.

I could see the subtle interplay between LLVM IR choices, PTX transformations, and hardware execution, solidifying my understanding that GPU performance is a multi-layered orchestration.

My deep experiments

I approached CUDA like a lab. I experimented relentlessly:

Observing how LLVM IR loop unrolling affects PTX instruction counts and warp efficiency.

Measuring occupancy changes as kernel launch parameters and register usage were modified.

Embedding inline PTX to explore instruction scheduling, shared memory bank conflicts, and predication.

Mapping thread blocks to SMs to study warp interleaving and latency hiding.

These experiments made abstract compiler and microarchitectural concepts tangible. Every IR pass, PTX instruction, and memory layout decision was observable in execution behavior.

I saw clearly how software abstractions orchestrate silicon, and how performance is a delicate balance of register allocation, memory coalescing, and warp-level scheduling.

Why this series exists

By the end of this journey, I knew why I had to write this series. I wanted more than a cursory “launch a kernel” tutorial. My goal is a narrative-driven, technically rigorous exploration of CUDA:

LLVM IR transformations and compiler mechanics.

PTX intermediate representation and optimization strategies.

CUDA kernel design, thread blocks, and warps.

GPU microarchitecture, SM pipelines, and memory hierarchy.

Real-world performance strategies, from occupancy tuning to memory coalescing.

This series is my attempt to bridge software and hardware, guiding readers through the journey I experienced.

Curiosity sparked by DeepSeek, insights crystallized by LLVM, experiments refined in PTX, and understanding solidified by GPU microarchitecture.

Readers will trace kernels from CUDA C++ to LLVM IR, into PTX, and finally to SM execution, experiencing the choreography of parallel computation firsthand.

You will see not only how to write CUDA code, but why each instruction executes as it does, how threads collaborate within warps, and how to extract maximum performance by understanding the deep interplay of compiler optimizations, PTX abstractions, and hardware realities.

Enjoy this incredible journey!